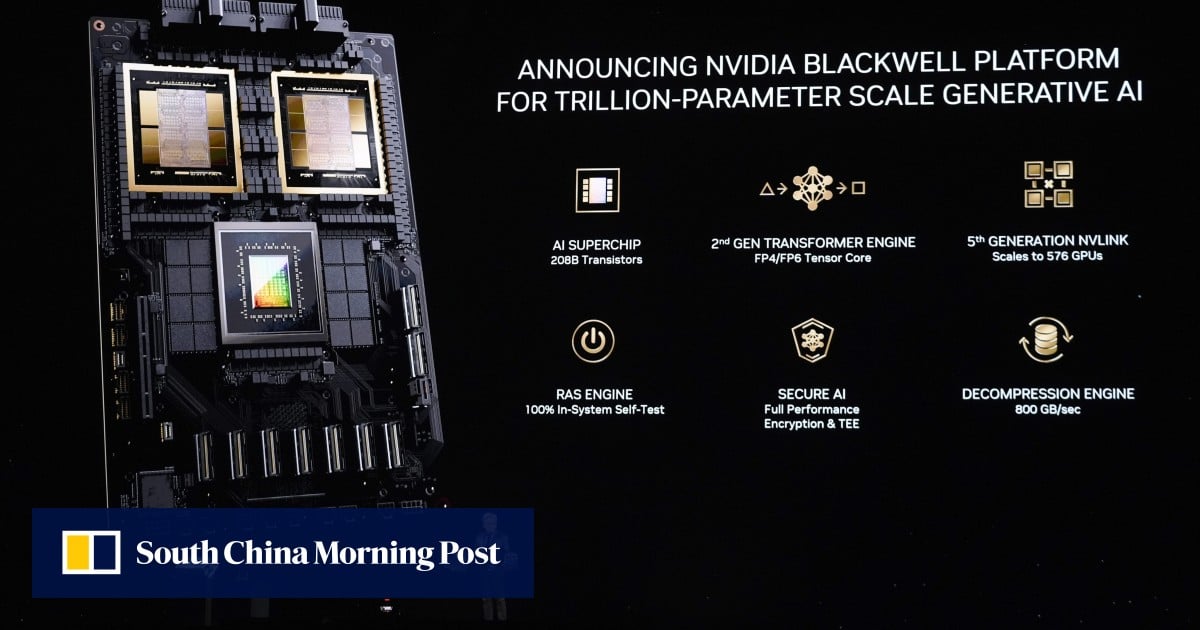

19 Mar Nvidia unveils new AI ‘superchip’ line-up Blackwell, succeeding dominant H100 chip banned from sale to China

A new processor design called Blackwell is multiple times faster at handling the models that underpin AI, the company said at its GTC conference in San Jose, California. That includes the process of developing the technology – a stage known as training – and the running of it, which is called inference.

Blackwell – named after David Blackwell, the first Black scholar inducted into the National Academy of Sciences – has a tough act to follow. Its predecessor, Hopper, fuelled explosive sales at Nvidia by building up the field of AI accelerator chips. The flagship product from that line-up, the H100, has become one of the most prized commodities in the tech world, fetching tens of thousands of dollars per chip.

The growth has sent Nvidia’s valuation soaring as well. It is the first chip maker to have a market capitalisation of more than US$2 trillion and trails only Microsoft and Apple overall.

The announcement of new chips was widely anticipated, and Nvidia’s stock is up 79 per cent this year through Monday’s close. That made it hard for the presentation’s details to impress investors, who sent the shares down about 1 per cent in extended trading.

Jensen Huang, Nvidia’s co-founder and chief executive, said AI is the driving force in a fundamental change in the economy and that Blackwell chips are “the engine to power this new industrial revolution”.

“Working with the most dynamic companies in the world, we will realise the promise of AI for every industry,” he said at Monday’s conference, the company’s first in-person event since the pandemic.

Blackwell will also have an improved ability to link with other chips and a new way of crunching AI-related data that speeds up the process. It’s part of the next version of the company’s “superchip” line-up, meaning it’s combined with Nvidia’s central processing unit called Grace. Users will have the choice to pair those products with new networking chips – one that uses a proprietary InfiniBand standard and another that relies on the more common Ethernet protocol. Nvidia is also updating its HGX server machines with the new chip.

The Santa Clara, California-based company got its start selling graphics cards that became popular among computer gamers. Nvidia’s graphics processing units, or GPUs, ultimately proved successful in other areas because of their ability to divide up calculations into many simpler tasks and handle them in parallel. That technology is now graduating to more complex, multistage tasks, based on ever-growing sets of data.

Blackwell will help drive the transition beyond relatively simple AI jobs, such as recognising speech or creating images, the company said. That might mean generating a three-dimensional video by simply speaking to a computer, relying on models that have as many as 1 trillion parameters.

For all its success, Nvidia’s revenue has become highly dependent on a handful of cloud computing giants: Amazon, Microsoft, Google and Meta Platforms. Those companies are pouring cash into data centres, aiming to outdo their rivals with new AI-related services.

The challenge for Nvidia is broadening its technology to more customers. Huang aims to accomplish this by making it easier for corporations and governments to implement AI systems with their own software, hardware and services.

Huang’s speech kicked off a four-day GTC event that has been called a “Woodstock” for AI developers.

Huang concluded his presentation by having two robots join him on stage, saying they were trained with Nvidia’s simulation tools.

“Everything that moves in the future will be robotic,” he said.